What the Luddite movement can teach us about the age of artificial intelligence

AI is amazing.

It writes.

It draws.

It codes.

It summarizes reports, creates music, designs images, and answers questions in seconds.

At first, it feels like magic.

Then, slowly, another feeling appears.

Fear.

Not the dramatic kind of fear we see in science fiction movies, where robots take over the world overnight. This fear is quieter. It sits somewhere in the back of our minds while we use ChatGPT, Midjourney, Claude, Gemini, or whatever new AI tool appears tomorrow.

It asks:

“If AI can do this, what happens to me?”

For writers, designers, translators, programmers, marketers, teachers, lawyers, accountants, and many others, this question feels personal.

Because AI is not just replacing muscle.

It is touching something we thought made us special: our intelligence, our creativity, our judgment, our ability to make meaning.

But this fear is not new.

About 200 years ago, workers in England felt something strangely similar. They were called Luddites. At night, they entered factories and smashed machines.

For a long time, history mocked them as foolish people who hated technology.

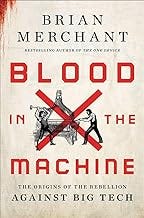

But Brian Merchant’s book, Blood in the Machine, tells a more interesting story.

The Luddites were not simply afraid of machines.

They were afraid of a world where machines created wealth, but ordinary people became poorer.

That is why their story matters today.

Because AI is not only a technology story.

It is also a story about power.

The Luddites Were Not Just “Anti-Technology”

When most people hear the word “Luddite,” they think of someone who hates new technology.

Someone who refuses to use a smartphone.

Someone who still prints emails.

Someone who says, “I don’t trust these new machines.”

But the real Luddites were not that simple.

The Luddite movement began in England in 1811, during the Industrial Revolution. Textile workers had spent years learning their craft. They knew how to make cloth with skill and care. Their hands were their livelihood. Their knowledge fed their families.

Then machines arrived.

New frames and looms could produce cloth faster and more cheaply. Factory owners no longer needed as many skilled workers. They could hire lower-paid workers to operate machines and produce more goods at a lower cost.

To the factory owners, this was progress.

To the workers, it was disaster.

Their wages fell.

Their bargaining power disappeared.

Their skills lost value.

Their future became uncertain.

So they fought back.

They broke the machines that were being used to destroy their livelihoods.

That is the part history often remembers.

But the deeper question they asked is often forgotten:

“If machines create more wealth, why are the workers becoming poorer?”

This is the heart of the Luddite story.

They were not fighting technology itself. They were fighting the way technology was being used.

They were fighting a system where the benefits went upward, while the pain stayed with ordinary workers.

AI Is the New Machine

The machines of the Industrial Revolution replaced human hands.

AI is different.

AI reaches for the human mind.

A loom could weave fabric.

AI can write an essay.

A spinning frame could produce thread.

AI can produce code.

A factory machine could speed up physical labor.

AI can speed up thinking, planning, designing, translating, analyzing, and creating.

That is why today’s anxiety feels so wide.

It is not only factory workers who feel threatened.

Writers wonder if their words will still matter.

Artists wonder if their style has already been copied.

Programmers wonder if junior coding jobs will disappear.

Teachers wonder how students will learn when AI can answer everything.

Lawyers and accountants wonder how much of their work can be automated.

Office workers wonder whether “productivity” is just another word for needing fewer people.

The question is not always:

“Will AI completely replace me?”

The scarier question is:

“Will AI make my work less valuable?”

That is exactly the kind of fear the Luddites felt.

Their craft did not disappear overnight. But its value was undercut. What once required skill, time, and experience could suddenly be made cheaper by machines.

Today, many knowledge workers feel the same shock.

What once took hours can now take seconds.

And when something becomes faster and cheaper, people naturally ask:

“What happens to the person who used to do that work?”

History Does Not Repeat Exactly. But the Questions Return.

History does not copy and paste itself.

We are not living in 1812.

We are not textile workers in England.

We are not breaking into factories at night with hammers.

But human fear has a way of returning in new clothes.

First, we are amazed by technology.

Then, we are excited by what it can do.

Then, we start to notice who benefits.

That is when the fear begins.

Technology always has two faces.

For some people, it is opportunity.

For others, it is a threat.

The Industrial Revolution created enormous wealth. It increased production, lowered the cost of goods, and changed the world. But for many workers living through it, progress did not feel like progress. It felt like losing control over their lives.

AI may follow a similar pattern.

It can help doctors diagnose diseases.

It can help students learn.

It can help small businesses do more with less.

It can help people write, build, research, and create faster than ever before.

But it can also concentrate wealth.

It can weaken labor.

It can pressure wages.

It can be trained on creative work without fair credit or payment.

It can turn human skill into a cheap button.

So the most important question is not:

“Is AI good or bad?”

A better question is:

“Who benefits when AI becomes powerful?”

The Real Problem Is Not AI. It Is Ownership.

AI itself does not decide who gets rich.

People do.

Companies do.

Markets do.

Governments do.

Institutions do.

That is why the real issue is not only what AI can do, but who owns it, who controls it, and who profits from it.

If AI allows a company to produce more with fewer workers, where does the extra profit go?

Does it go to employees?

Does it go to customers?

Does it go to artists and writers whose work helped train the models?

Does it go to society through taxes and public services?

Or does it mostly go to shareholders and a small number of powerful technology companies?

This is where the Luddite question becomes modern again.

The Luddites asked, in effect:

“If machines create wealth, who should receive that wealth?”

Today, we must ask:

“If AI creates wealth, who should receive that wealth?”

This question matters more than ever because AI is not just another tool.

It is becoming infrastructure.

It may shape how we work, learn, search, communicate, create, and make decisions. When a technology becomes that powerful, leaving everything to the market is not neutral. It is a choice.

And often, that choice favors those who already have power.

We Do Not Need Hammers. We Need Better Questions.

The Luddites picked up hammers.

We need something else.

We need questions.

Not because questions are soft, but because questions shape laws, policies, companies, schools, and public debate.

We should ask:

How should the wealth created by AI be shared?

How should artists, writers, and creators be compensated when their work trains AI systems?

How do we help workers whose jobs are changed or weakened by automation?

What decisions should never be fully handed over to machines?

What should humans remain responsible for?

How do we make sure AI serves people instead of simply replacing them?

These questions are not anti-technology.

They are pro-human.

They do not say, “Stop AI.”

They say, “Do not let AI become another machine that creates wealth for a few while making everyone else more insecure.”

That is the lesson of the Luddites.

They failed to stop the Industrial Revolution.

But their question survived.

What Remains Human in the Age of AI?

As AI becomes more capable, we may need to rethink what human value means.

For a long time, many of us believed our value came from being productive.

How much can we write?

How fast can we code?

How many designs can we make?

How many reports can we complete?

But AI is very good at speed.

It can produce more than we can.

It can work longer than we can.

It does not get tired, bored, or anxious.

So if we compete with AI only on speed and output, we may lose.

But humans are not only output machines.

Humans ask why.

Humans care about meaning.

Humans feel responsibility.

Humans understand pain, dignity, trust, and consequence.

Humans can decide that just because something is efficient does not mean it is right.

AI can generate answers.

But humans must decide which questions matter.

AI can write a sentence.

But humans must decide whether that sentence is honest, kind, useful, or harmful.

AI can analyze data.

But humans must decide what values should guide the use of that analysis.

In the age of AI, the most important human skills may not be technical skills alone.

They may also be empathy, ethics, judgment, imagination, communication, and responsibility.

The smarter machines become, the more human we may need to become.

The Question From 200 Years Ago Is Back

Brian Merchant’s Blood in the Machine makes the Luddites feel less like a strange group from the past and more like a warning from history.

Two hundred years ago, workers looked at machines and feared that their skills would no longer matter.

Today, we look at AI and wonder whether our knowledge, creativity, and judgment will still matter.

They heard the sound of factory machines.

We hear the quiet hum of servers.

They watched their craft lose value.

We watch AI generate work that once required years of training.

They asked:

“What happens to us?”

We are asking the same thing.

The lesson of the Luddites is not that we should smash machines.

The lesson is that we should pay attention when technology creates wealth but people feel poorer, weaker, and more replaceable.

AI may become one of the most powerful tools humanity has ever built.

But tools do not automatically create justice.

People do.

Laws do.

Institutions do.

Collective choices do.

So maybe the question is not whether AI will change the world.

It already is changing it.

The real question is:

Who will AI change the world for?

Two hundred years ago, people smashed machines because they felt no one was listening.

Today, we still have a chance to listen before the fear turns into something louder.

We do not need to fight AI.

But we do need to decide what kind of future AI is allowed to build.